What Evaluators Are Really Looking For In Your Tender Response

Have you ever read tender feedback and thought, “We said all of that, so why didn’t it score?”

If bids drive your revenue, that question is expensive. A solid service can still lose when the answer is hard to mark, hard to trust, or hard to follow.

How does tender response evaluation work?

Tender response evaluation is the process buyers use to score your answers against their published criteria. In simple terms, evaluators check four things fast: did you answer the question, show a clear method, prove your claims, and reduce risk?

They are not scoring effort, reputation, or how late your team stayed up finishing the draft. They score what they can see, match, and justify. That’s why clear structure matters as much as strong delivery.

What evaluators notice before they read your response in full

Most evaluators don’t read your response in full when they first open it. They scan it.

That first pass is about orientation. They check the question, the scoring points, the word limit, and whether your answer is easy to follow. Current GOV.UK guidance on assessing competitive tenders makes clear that buyers must assess against the published method. Still, fairness does not mean they’ll go digging for your best point.

They’re looking for a clean route through the answer. Can they spot your method? Can they see who does what? Is there proof near the claim, or hidden three pages later? If they have to search, your marks start slipping before the detail has had a chance.

A good response lowers effort for the marker. It mirrors the question. It answers early. It makes evidence easy to find.

Evaluators don’t award marks for effort. They award marks for answers they can score with confidence.

If you want to understand that opening scan in more detail, this guide on UK tender evaluation in the first 90 seconds is well worth a read.

Why this matters when your team is already stretched

For most SMEs, bids don’t land in a calm, empty diary. They land in the middle of delivery, staff issues, reporting deadlines, and the usual Tuesday nonsense.

That’s why near-miss losses sting. You know you can do the job. Yet the feedback says “unclear”, “limited evidence”, or “insufficient detail”. Those comments often point to fixable writing and review issues, not weak delivery.

This matters because evaluators are not there to interpret your intention. They won’t reward what you meant to say. They reward what the page makes obvious. So when a strong team undersells itself on paper, the business pays twice: once in lost revenue and again in wasted effort.

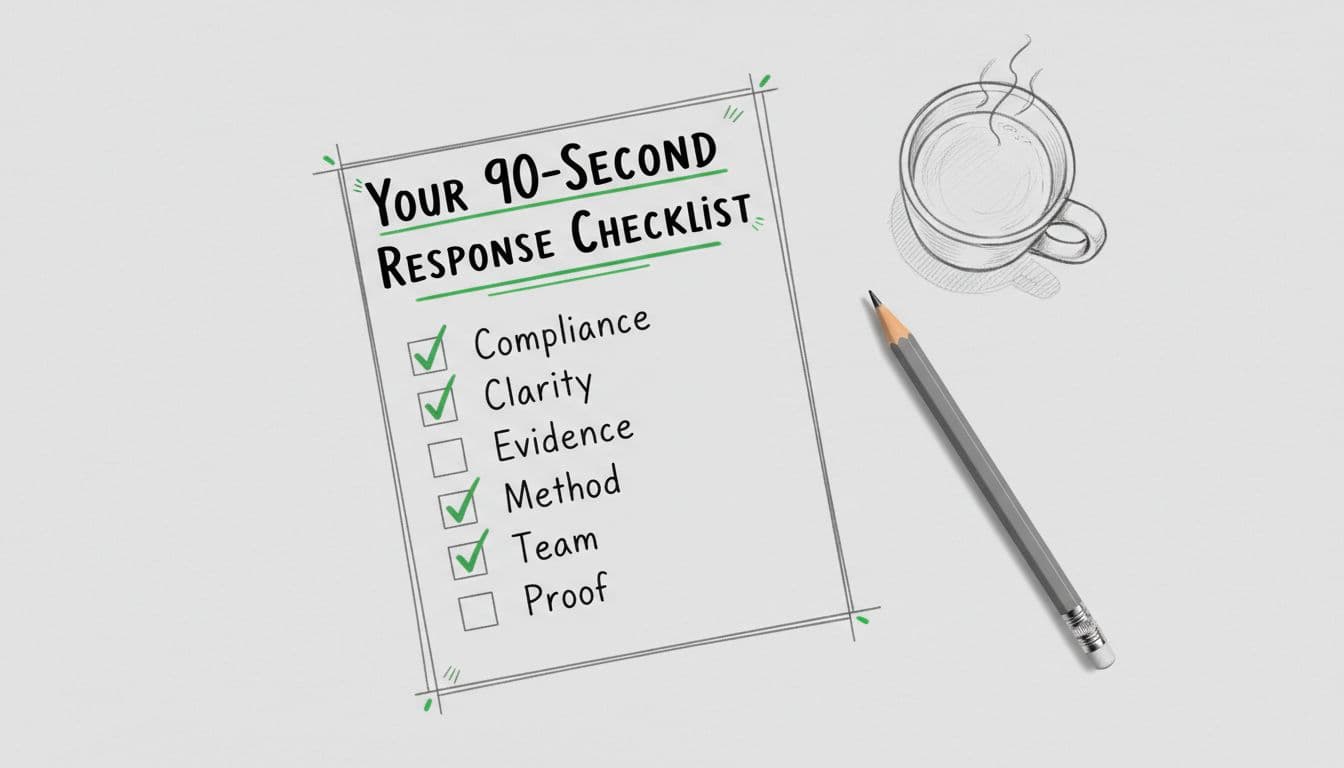

A tender response checklist you can use today

Use this before final review. Think of it like labelling drawers: the content may be strong, but it still needs to be findable.

→ Start with the answer in the first two lines, not the backstory.

→ Mirror the wording of the question in your headings.

→ Break your method into steps that feel real and workable.

→ Name roles, owners, and who makes decisions.

→ Put proof beside the claim, not in a separate appendix or towards the end of the response.

→ Use dates, volumes, response times, and clear measures.

→ Show the benefit to the buyer, not only your activity.

→ Signpost attachments so the evaluator knows why they matter.

→ Cover every sub-part of the question, even the small ones.

A response that feels calm, concrete, and complete is easier to trust.

Common mistakes that lose marks

A wall of text makes the evaluator work too hard. Generic claims such as “high-quality” or “robust” sound nice, but without proof they carry no weight. Copying old content is another issue. It saves time upfront, but it generally costs marks during evaluation because it’s answering a different question.

Another common issue is mismatch. Your method promises a gold-standard service, but the staffing, timelines, or pricing suggest something thinner. Evaluators notice that fast, because they’re reading for risk as much as quality.

The final trap is skipping proofreading and content review. A grammar check is not enough nowadays, especially with AI in the game. You need a scoring check.

This old bid evaluation guidance note still gives a helpful reminder on clarity, consistency, and record keeping. For more examples, see these common bid proposal mistakes that often sink otherwise capable submissions.

Make it easy to award you the marks

Evaluators want answers they can follow, score, and defend. If your draft makes them hunt, doubt, or translate, marks fall away.

If your team writes bids in-house, an expert review can lift results without taking control away. If Bid Win Rate Accelerator Training or a bid review retainer feels like the right next step, you can explore our bid review and training options here or book a fit check call.

Meet the Author

Melissa is the founder of Bidsmithery™, with over 15 years of experience across bid writing, bid management and evaluation. Having sat on both sides of the process as writer and evaluator, she works across sectors because great bids follow the same principles wherever you’re tendering. With more than £103M in contracts secured, she specialises in framework bids and strategic bid reviews, helping organisations sharpen their approach when it really counts.